Optimizing reservoirs and developing an ever-evolving intelligent model of a reservoir are key concerns for operators, particularly in challenging times. For that reason, operators need good production geologists on hand. A production geologist bridges a number of disciplines, most notably geology and engineering, but also geochemistry, geophysics, and numerical methods. Welcome to an interview with Terngu Utim, who discusses production geology and its new potential and opportunities.

What is your name and relation to the oil industry?

My name is Terngu Utim, and I am a geologist and geomodeller at XPSG LLC, an exploration and production services company. I did my graduate studies in Petroleum Geoscience at Royal Holloway, University of London, and have been in the oil industry for over 16 years. I started off with a brief stint at CoreLab that opened my eyes to core-scale reservoir characterization; then I got an opportunity to join Shell, where I was deployed as a Production and Development Geologist. After 6 years at Shell developing and managing large fields, I joined Roxar in Houston. At Roxar I spent a lot of time working on geocellular models, improving geoscience software, and training people. In 2011, I joined Nexen Petroleum in Dallas, initially working in Technical Assurance and later in the Production Group in Houston. In 2015 I co-founded XPSG, a consulting company, and we have been providing subsurface geoscience services and novel analytics-based solutions, and helping companies set up adaptive learning systems that embed learning and knowledge management in today's manpower-depleted oilfield.

How did you become interested in production geology?

My first posting at Shell was to an Asset Team, primarily as a Production Geologist, where my role was to understand subsurface controls on reservoir performance within a field, characterize the geology, build models, and come up with ways to increase the field's production.

With more information available, actual data can be used to refine and review conceptual geological theories.

The Production Geologist has a lot of information to work with; the challenge is to fit large volumes of data, acquired at different scales and frequencies, into a consistent interpretation.

I had to integrate a lot of disparate data, something I later found is not the case on the exploration side. This relative abundance of data helped me develop and manage my reservoirs with a lot more rigor. Also, many of my recommendations were implemented in a relatively short time, and seeing the results was rewarding. Production geology requires integration with other disciplines within a subsurface team, and this helped me build a holistic understanding of the subsurface.

What are some of the new techniques for production geology?

One of the goals of production geology is to understand and describe the rock properties and flow geology of reservoirs, so we process reservoir rock, fluid, and production data into geospatial attributes that are used in models and maps to describe and predict reservoir behavior. Two of the biggest trends in production geology are the enhanced use of analytics and real-time monitoring.

Advances in computing and in our ability to collect and manage big data have had a big impact on the oilfield. We recently studied 30 operational US startups (Fig. 2), all founded after 2010, and the study reveals a shift from standalone technology (hardware and software) to networks that sit on top of big data analytics platforms, transmitting real-time data and, in some cases, solutions derived through machine learning. The IT revolution is therefore affecting the oilfield in ways that require the production geologist to also become a skilled data scientist, able to process and integrate large volumes of geospatially aware data and to use advanced analytics to predict outcomes or flag anomalies on the fly.

Fig. 2: A "30-Under 5" triangular plot of US O&G service-sector startups illustrates the decade's prevailing trend toward analytics and real-time data support for remote operations. Big data is sometimes analyzed on the fly for its descriptive, predictive, and prescriptive value.
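To make "flag anomalies on the fly" concrete, here is a minimal sketch of one common approach: a rolling z-score test on a stream of readings. The function name `flag_anomalies`, the 5-sample window, the 3-sigma threshold and the rate values are all hypothetical choices for illustration, not the method used in the study described above.

```python
from collections import deque
from math import sqrt


def flag_anomalies(stream, window=5, threshold=3.0):
    """Yield (index, value, is_anomaly) for each reading in a data stream.

    A reading is flagged when it lies more than `threshold` standard
    deviations from the mean of the previous `window` readings.
    """
    history = deque(maxlen=window)  # rolling baseline of recent readings
    for i, value in enumerate(stream):
        is_anomaly = False
        if len(history) == window:
            mean = sum(history) / window
            std = sqrt(sum((x - mean) ** 2 for x in history) / window)
            if std > 0 and abs(value - mean) / std > threshold:
                is_anomaly = True
        yield i, value, is_anomaly
        history.append(value)


# Hypothetical daily oil rates (STB/d) with one abrupt drop
rates = [1000, 1010, 990, 1005, 995, 1002, 400, 1001]
print([i for i, _, bad in flag_anomalies(rates) if bad])  # [6]
```

Because the test only needs the last few readings, it can run on data as it arrives rather than after the fact; a production system would likely tune the window and threshold per well.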

ANALYTICS IN PRODUCTION GEOLOGY

We are able to collect, store and analyze huge volumes of structured and unstructured data, a far cry from the days of spreadsheets. Data is now acquired at very high frequency and analyzed (sometimes on the fly) for its descriptive, predictive and prescriptive value.
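As a small illustration of the descriptive and predictive value mentioned above, the sketch below fits an exponential (Arps, b = 0) decline to a production history and then forecasts future rates. The exponential model, the function names and the synthetic 5%/month data are assumptions chosen for illustration; real wells often need hyperbolic or other decline models.

```python
import numpy as np


def fit_exponential_decline(t, q):
    """Fit q(t) = qi * exp(-D * t) by least squares on ln(q).

    Returns (qi, D): initial rate and nominal decline rate per unit of t.
    """
    t = np.asarray(t, dtype=float)
    log_q = np.log(np.asarray(q, dtype=float))
    slope, intercept = np.polyfit(t, log_q, 1)  # ln q = ln qi - D t
    return float(np.exp(intercept)), float(-slope)


def forecast_rate(qi, D, t):
    """Predict rates at times t with the fitted decline."""
    return qi * np.exp(-D * np.asarray(t, dtype=float))


# Hypothetical monthly rates following a 5%/month exponential decline
months = np.arange(12)
rates = 1000.0 * np.exp(-0.05 * months)
qi, D = fit_exponential_decline(months, rates)
print(round(qi, 3), round(D, 4))  # 1000.0 0.05
print(forecast_rate(qi, D, [24]).round(1))
```

The fit itself is descriptive (it summarizes the history in two numbers); evaluating the fitted curve at later times is the predictive step.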

Analytics and machine learning help geologists achieve higher repeatability and success in diverse fields such as quantitative seismic geomorphology and well-site operations. The statistical tools in analytics have also transformed the speed at which we systematically reduce the acoustic data, multicomponent elastic data, and dynamic production data we gather from our fields. These new techniques help us translate more data into maps and better 3D geological models.
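One of the simplest ways to "translate more data into maps" is to interpolate a well attribute onto a grid. The sketch below uses inverse distance weighting (IDW), chosen here only because it is short and easy to check; kriging or other geostatistical methods are the usual choice in practice. The function name, the power of 2 and the net-pay values are hypothetical.

```python
import numpy as np


def idw_grid(xw, yw, values, gx, gy, power=2.0):
    """Inverse-distance-weighted map of well values on a regular grid.

    xw, yw, values: well coordinates and the attribute measured at each well.
    gx, gy: 1D grid node coordinates. Returns an array of shape (len(gy), len(gx)).
    """
    xw, yw, values = (np.asarray(a, dtype=float) for a in (xw, yw, values))
    X, Y = np.meshgrid(np.asarray(gx, float), np.asarray(gy, float))
    # Distance from every grid node to every well: shape (ny, nx, n_wells)
    d = np.hypot(X[..., None] - xw, Y[..., None] - yw)
    d = np.maximum(d, 1e-9)  # avoid division by zero at well locations
    w = d ** -power          # closer wells get larger weights
    return (w * values).sum(axis=-1) / w.sum(axis=-1)


# Hypothetical net-pay values (ft) at three wells
grid = idw_grid([0, 100, 0], [0, 0, 100], [10.0, 20.0, 30.0],
                gx=np.linspace(0, 100, 5), gy=np.linspace(0, 100, 5))
print(grid.round(1))
```

IDW always honours the well values and never extrapolates beyond their range, which makes it a reasonable quick-look map but not a substitute for a geologically constrained model.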

REAL-TIME MONITORING IN PRODUCTION GEOLOGY

As our ability to collect and store large volumes of operational data keeps improving, we face a need to rapidly analyze the data and make decisions based on it. Production geologists faced with a steady stream of real-time data have turned to machine learning and other analytics techniques to systematically reduce the "time to decision". From wellhead- or node-based production monitoring to field-wide reservoir monitoring, conventional methods such as production data and occasional well testing have been supplanted by continuous monitoring mechanisms. These began with downhole gauges and now include buried seismic receiver arrays on land and at the ocean bottom, streaming data continuously or occasionally to help reservoir managers intervene as early as possible.
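A simple way to shorten the "time to decision" on a continuous gauge stream is a cumulative-sum (CUSUM) change detector, which raises an alarm on a small but sustained shift long before it would be obvious on a plot. The sketch below is a standard one-sided CUSUM; the target pressure, slack and limit values are hypothetical and would need tuning to real gauge noise.

```python
def cusum_drop(readings, target, slack, limit):
    """One-sided (lower) CUSUM: index of the first alarm for a sustained drop.

    target: expected steady reading (e.g. gauge pressure in psi)
    slack:  allowance per sample; deviations smaller than this are ignored
    limit:  decision threshold on the accumulated deviation
    Returns None if no alarm is raised.
    """
    s = 0.0
    for i, x in enumerate(readings):
        # accumulate only the part of the shortfall that exceeds the slack
        s = max(0.0, s + (target - x) - slack)
        if s > limit:
            return i
    return None


# Hypothetical downhole gauge: steady at 3000 psi, then a 10 psi drop
pressures = [3000.0] * 5 + [2990.0] * 10
print(cusum_drop(pressures, target=3000.0, slack=2.0, limit=20.0))  # 7
```

The slack keeps ordinary noise from accumulating, while the limit trades detection speed against false alarms.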

TRENDS IN STATIC MODELLING

Free of computing limitations but having to do more with less, many companies have shifted from detailed full-field geological models to smart models designed to address specific challenges in order to reduce model turnaround time. At several shale-play companies, where small teams manage hundreds of producing wells while supporting ongoing well operations, loop-scale models populated with seismically derived reservoir and geomechanical properties are combined with non-technical factors to produce common risk element block models. These act as the team's regional perspective models and complement 'facies variation' scale models that are built to support finer-scale well operations such as geosteering. This is a departure from the old view of 3D static models as an optional, mainly visualization, tool, in favor of the view that they are a 'common project implementation platform' for large-scale development execution. These models are updated with data from ground-based wireless sensors and are connected to analytics platforms, where they also provide the knowledge needed for 'machine learning'-based decision making during geosteering and stimulation operations.
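The common risk element block models described above can be sketched, under a simplifying assumption, as a cell-by-cell product of chance-of-success grids, one grid per risk element (technical or non-technical). Treating the elements as independent and multiplying them is a common convention in risk mapping but is an assumption here; the element names and values are hypothetical.

```python
import numpy as np


def composite_risk(elements):
    """Combine per-cell chance-of-success grids into one composite grid.

    elements: mapping of risk-element name -> 2D array of values in [0, 1].
    Elements are treated as independent, so chances multiply cell by cell.
    """
    grids = [np.asarray(g, dtype=float) for g in elements.values()]
    for name, g in zip(elements, grids):
        if g.shape != grids[0].shape:
            raise ValueError(f"{name}: grid shape {g.shape} != {grids[0].shape}")
        if np.any((g < 0) | (g > 1)):
            raise ValueError(f"{name}: values must lie in [0, 1]")
    return np.prod(np.stack(grids), axis=0)


# Hypothetical 1 x 2 block model: two cells, three risk elements
elements = {
    "reservoir_quality": [[0.9, 0.5]],  # e.g. from seismic attributes
    "geomechanics":      [[0.8, 0.9]],  # e.g. brittleness or stress
    "surface_access":    [[1.0, 0.2]],  # a non-technical factor
}
print(composite_risk(elements))  # [[0.72 0.09]]
```

A single weak element (here, surface access in the second cell) is enough to downgrade a cell, which is the behavior a regional screening model usually wants.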

What are the software programs that you use?

In keeping with the diverse demands of production geology, I've had to adapt to different software depending on the needs of the task at hand. For modeling and geoscience tasks I use Petrel, RMS, Petra, Kingdom, Kappa-Rubis, and DSG; for data science, I use Excel, Spotfire, Crystal Ball, Azure, and RStudio; for learning management solutions, I use Moodle, ATutor, and ILIAS; and for certain specialty tasks, I use whatever is available, so long as I can export my results to the more common platforms.

What are some innovative approaches being used in reservoir revitalization?

To revitalize mature fields, innovation is required across the spectrum of field development activities. Key elements in this process include developing an updated understanding of the subsurface, choosing the appropriate reservoir intervention technology, and using Agile execution strategies to drive down cost through process efficiency, shorter execution time, and greater responsiveness.

In the course of production, many fields reveal flow behavior that differs from initial predictions. The differences could be production-induced, a product of interaction with stimulation fluids, the result of missed or unknown reservoir characteristics, or a combination of these. Over the course of field life, there frequently arises a need to locate the missed or bypassed pay (LTRO). The conventional approach has been to rely on depletion patterns observed in history-matched dynamic models, but predictions based on these models can come with a wide band of uncertainty, and history matching is frequently non-unique. Newer methods that involve actual reservoir-wide measurements are therefore preferred, and this has contributed to the growth of technologies for monitoring reservoir-wide behavior during production.
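A quick way to see why history matching is non-unique is the textbook pseudo-steady-state radial inflow equation in field units, q = 0.00708 k h (p_avg − p_wf) / (μ B_o [ln(r_e/r_w) − 0.75 + s]). Permeability k and thickness h enter only as the product kh, so a thin, permeable reservoir and a thick, tighter one can produce identical rates. The sketch below is illustrative only; all input values are hypothetical.

```python
from math import log


def pss_oil_rate(k_md, h_ft, p_avg_psi, p_wf_psi, mu_cp, bo, re_ft, rw_ft, skin=0.0):
    """Pseudo-steady-state radial oil inflow in field units (STB/d)."""
    drawdown = p_avg_psi - p_wf_psi
    return (0.00708 * k_md * h_ft * drawdown
            / (mu_cp * bo * (log(re_ft / rw_ft) - 0.75 + skin)))


# Hypothetical well and fluid properties shared by both geological scenarios
common = dict(p_avg_psi=3000, p_wf_psi=2000, mu_cp=1.2, bo=1.25, re_ft=1000, rw_ft=0.35)
thin_good_rock = pss_oil_rate(k_md=200, h_ft=10, **common)
thick_tight_rock = pss_oil_rate(k_md=100, h_ft=20, **common)
print(round(thin_good_rock, 1), round(thick_tight_rock, 1))  # identical rates
```

Matching the rate history alone cannot tell these two scenarios apart, yet they imply different volumes and different locations for remaining oil; direct reservoir-wide measurements help break that tie.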

Wireless seismic sensors are used for passive as well as active time-lapse seismic monitoring, both in deepwater using ocean-bottom nodes and onshore with microseismic sensors. In addition to seismic, novel techniques for inverting 3D EM data have also promoted the use of EM data to produce resistivity cubes, which can provide additional insight into the lateral distribution of remaining hydrocarbons in mature fields.
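One standard way to turn resistivity into a hydrocarbon indicator is Archie's equation, S_w = (a R_w / (φ^m R_t))^(1/n). The sketch below applies it cell by cell, assuming clean (shale-free) rock, known porosity and water resistivity, and default a = 1, m = n = 2; all of these are assumptions, and EM-derived resistivities are far coarser than log resistivities, so results from a resistivity cube are best read as relative trends.

```python
import numpy as np


def archie_water_saturation(rt, phi, rw, a=1.0, m=2.0, n=2.0):
    """Archie's equation: Sw = (a * Rw / (phi**m * Rt)) ** (1/n), clipped to [0, 1].

    rt:  true formation resistivity (ohm-m), e.g. cells of a resistivity cube
    phi: porosity (fraction), e.g. from the static model
    rw:  formation water resistivity (ohm-m)
    a, m, n: tortuosity factor, cementation and saturation exponents
    """
    rt = np.asarray(rt, dtype=float)
    phi = np.asarray(phi, dtype=float)
    sw = (a * rw / (phi ** m * rt)) ** (1.0 / n)
    return np.clip(sw, 0.0, 1.0)


# Hypothetical cells: same porosity, increasing resistivity
rt_cells = [1.25, 5.0, 20.0]
sw = archie_water_saturation(rt_cells, phi=0.2, rw=0.05)
print(1.0 - sw)  # hydrocarbon saturation per cell
```

Higher resistivity at the same porosity maps to higher hydrocarbon saturation, which is why resistivity volumes can highlight unswept areas.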

Some of the innovations in reservoir characterization include fluidics-based modeling of fluid interactions at the pore scale using physical models, and the use of high-definition CT imaging and logging of whole core or RCAL samples to generate digital models. These new practices help production geologists create better physical and digital representations of the reservoir for quasi-realistic modeling of fluid interactions in reservoirs under secondary or tertiary recovery. In some cases, these CT images have been used in core-based characterization of shale plays and in building models of representative elementary volumes (REVs) for multi-scale flow modeling.
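A representative elementary volume is usually identified by computing a property over progressively larger subvolumes and looking for the size at which the value stops fluctuating. The sketch below does this for porosity on a segmented (pore/grain) 3D image. The random synthetic volume stands in for a real CT scan and is an assumption; because it has no spatial correlation it converges quickly, whereas real rock typically needs much larger volumes.

```python
import numpy as np


def porosity_vs_cube_size(pore_mask, sizes):
    """Porosity of centred cubic subvolumes of a segmented CT volume.

    pore_mask: 3D boolean array, True where a voxel is pore space.
    sizes: cube edge lengths in voxels. Returns {edge_length: porosity}.
    """
    mask = np.asarray(pore_mask, dtype=bool)
    centre = [dim // 2 for dim in mask.shape]
    result = {}
    for n in sizes:
        if n > min(mask.shape):
            raise ValueError(f"cube size {n} exceeds the imaged volume")
        lo = [c - n // 2 for c in centre]
        sub = mask[lo[0]:lo[0] + n, lo[1]:lo[1] + n, lo[2]:lo[2] + n]
        result[n] = float(sub.mean())
    return result


# Synthetic stand-in for a segmented CT scan: about 20% random pore voxels
rng = np.random.default_rng(seed=0)
scan = rng.random((64, 64, 64)) < 0.20
for n, phi in porosity_vs_cube_size(scan, [2, 4, 8, 16, 32, 64]).items():
    print(f"{n:3d} voxels: porosity = {phi:.3f}")
```

Small cubes give erratic porosity; the edge length beyond which the curve flattens is a practical estimate of the REV for that property.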

Real-time monitoring of hydrocarbon flow into, within, and out of processing facilities has been going on for over two decades, but the unique challenge of optimizing field development in unconventional shale plays has inspired the development of real-time monitoring tools that connect the subsurface evaluation platform to drilling, completions, and production. This means that the evaluation team works from a common platform with real-time access to drilling and completion operations as well as production operations. Advances in real-time and time-lapse monitoring using passive seismic sensors have made it possible to monitor changes in reservoirs during stimulation, production, or injection. In unconventional reservoirs, passive microseismic arrays are used to evaluate the effectiveness of fracs, to monitor completions and production, and even to determine the size and location of unstimulated rock volume before a refrac. Resistivity volumes inverted from electromagnetic surveys are also used to monitor production performance in mature reservoirs up to 10,000 ft deep.
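As a deliberately crude sketch of how microseismic data can point to unstimulated rock before a refrac, the code below bins event hypocentres into a grid over the target volume and reports the fraction of cells with no events. Treating "no events" as "unstimulated" is a simplifying assumption; real workflows account for detection limits, location uncertainty and event magnitude. The grid geometry and event coordinates are hypothetical.

```python
import numpy as np


def unstimulated_fraction(events_xyz, origin, cell_size, shape):
    """Fraction of target-volume cells that contain no microseismic events.

    events_xyz: (n, 3) event hypocentre coordinates
    origin:     (x, y, z) of the grid corner; cell_size: cell edge length
    shape:      number of cells along x, y, z
    """
    ev = np.asarray(events_xyz, dtype=float).reshape(-1, 3)
    idx = np.floor((ev - np.asarray(origin, dtype=float)) / cell_size).astype(int)
    inside = np.all((idx >= 0) & (idx < np.asarray(shape)), axis=1)
    stimulated = np.zeros(shape, dtype=bool)
    stimulated[tuple(idx[inside].T)] = True  # any event marks the cell as stimulated
    return 1.0 - stimulated.mean()


# Hypothetical events in a 2 x 2 x 1 grid of 100 ft cells; the last one falls outside
events = [(50, 50, 50), (60, 40, 10), (150, 50, 50), (500, 0, 0)]
print(unstimulated_fraction(events, origin=(0, 0, 0), cell_size=100.0, shape=(2, 2, 1)))  # 0.5
```

Mapping which cells stay empty, not just how many, is what would guide refrac placement.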

The use of Agile methods in technical reviews, project planning, and execution is becoming more common as teams seek to learn rapidly while continuously optimizing processes and decisions through the field development life cycle. This need to be Agile has contributed to the rise of real-time data gathering and the use of analytics and machine learning in the oilfield.

How do you develop a workflow that you can trust? What are the decisions you make when you determine the sequence?

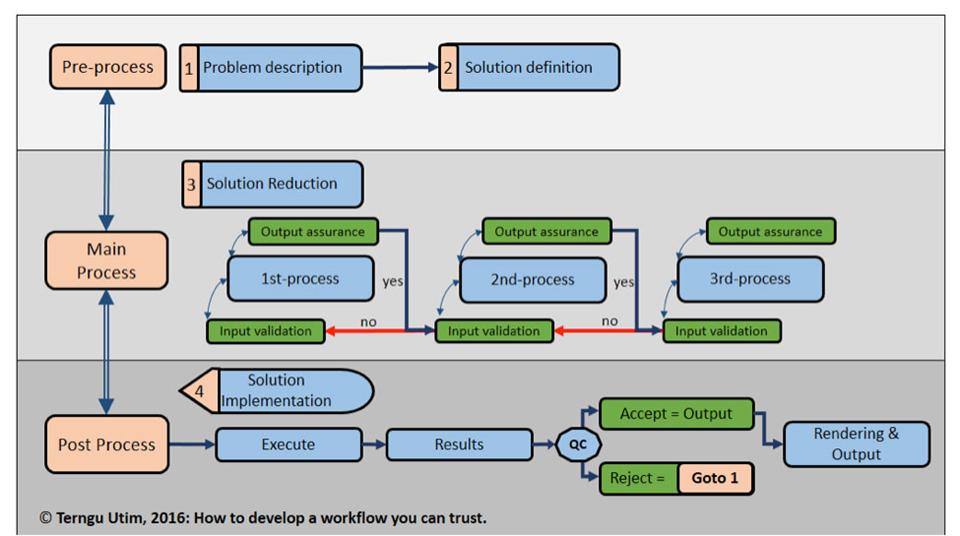

My toolkit for building workflows consists of a chart and a set of basic rules.

Basic rules:

- Good workflows should have a simple idealized sequence

- Good workflows are easy to customize

- Good workflows should allow recursive loops and branching

As my chart shows, I/O and transitions are key because you want to ensure consistency.

I always define the purpose of my workflow by framing my problem and solution space very early. Solution reduction is where the granular sequential process happens. It is easy to define and reduce a well-posed problem, so framing is the foundation of a good algorithm. Validation and assurance are mid-process QC protocols that are less elaborate than the results QC; they perform lesser roles, such as ensuring unit consistency.
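The rules and stages described above (a simple sequence, mid-process validation such as unit checks, a results QC, and recursive loops back to the inputs) can be sketched as a tiny workflow runner. This is a hypothetical illustration, not the chart or tooling referred to in the interview; the `Step` class, the `revise` callback and the gross-rock-volume example are all assumptions.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple

Quantity = Tuple[float, str]  # (value, unit)
State = Dict[str, Quantity]


@dataclass
class Step:
    name: str
    run: Callable[[State], State]
    expects_units: Dict[str, str] = field(default_factory=dict)


def validate_units(state: State, step: Step) -> None:
    """Mid-process assurance: check that a step's outputs carry the expected units."""
    for key, unit in step.expects_units.items():
        _, actual = state[key]
        if actual != unit:
            raise ValueError(f"{step.name}: {key} is in {actual}, expected {unit}")


def run_workflow(steps, inputs, results_qc, revise, max_loops=3):
    """Run steps in sequence; loop back with revised inputs until results QC passes."""
    for attempt in range(1, max_loops + 1):
        state = dict(inputs)
        for step in steps:
            state = step.run(state)
            validate_units(state, step)
        if results_qc(state):
            return state, attempt
        inputs = revise(inputs, state)  # recursive loop back to the inputs
    raise RuntimeError(f"results QC failed after {max_loops} loops")


# Hypothetical two-step example: convert thickness to feet, then compute
# gross rock volume (GRV) in acre-ft from an area in acres.
def to_feet(s):
    h, unit = s["thickness"]
    return {**s, "thickness": (h * 3.28084, "ft")} if unit == "m" else s


def gross_rock_volume(s):
    area, _ = s["area"]
    h, _ = s["thickness"]
    return {**s, "grv": (area * h, "acre-ft")}


steps = [
    Step("to_feet", to_feet, {"thickness": "ft"}),
    Step("grv", gross_rock_volume, {"grv": "acre-ft"}),
]
result, loops = run_workflow(steps, {"area": (100.0, "acres"), "thickness": (10.0, "m")},
                             results_qc=lambda s: s["grv"][0] > 0,
                             revise=lambda inputs, s: inputs)
print(result["grv"], loops)
```

Keeping each step's inputs and outputs explicit makes the transitions checkable, and swapping, adding or branching steps only means editing the `steps` list.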

What are the steps you take when determining where to drill in a mature field that may have economic locations between existing depleted wells?

Broadly speaking, there are three main steps:

- A Gap Analysis to identify the volume and location of the mobile oil that remains within compartments of the mature field.

- An Intervention Analysis to establish the costs and risks associated with developing the remaining oil.

- A Project execution review where the entire project including non-technical aspects such as commercial and ability-to-execute factors are evaluated and de-risked.
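The first two steps lend themselves to simple arithmetic. Below is a minimal sketch assuming a volumetric estimate of remaining mobile oil in a compartment, N = 7758 A h φ (S_o − S_or) / B_o (field units: acres, ft, bbl/acre-ft, reservoir bbl/STB), and an expected monetary value (EMV) as the intervention screen. Both formulas are standard but are assumptions about how such an analysis might be set up; the compartment properties and economics are hypothetical, and the execution review still has to weigh commercial and ability-to-execute factors that no formula captures.

```python
def remaining_mobile_oil_stb(area_acres, h_ft, phi, so_current, sor, bo):
    """Gap analysis: remaining mobile oil (STB) in a compartment.

    Only oil above residual saturation (So - Sor) is treated as mobile.
    7758 converts acre-ft to reservoir barrels; Bo converts to stock-tank barrels.
    """
    mobile = max(so_current - sor, 0.0)
    return 7758.0 * area_acres * h_ft * phi * mobile / bo


def expected_monetary_value(p_success, npv_success, cost_if_failure):
    """Intervention analysis: EMV = Pc * NPV(success) - (1 - Pc) * cost(failure)."""
    return p_success * npv_success - (1.0 - p_success) * cost_if_failure


# Hypothetical compartment between depleted wells
oil = remaining_mobile_oil_stb(area_acres=40, h_ft=25, phi=0.22, so_current=0.45, sor=0.25, bo=1.2)
emv = expected_monetary_value(p_success=0.6, npv_success=10e6, cost_if_failure=4e6)
print(round(oil), round(emv))
```

Ranking candidate compartments by both numbers helps separate locations with oil from locations worth drilling.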

These steps apply to conventional as well as unconventional projects. However, the nature of the accumulation in conventional reservoirs is different from that in ultra-low-permeability unconventionals, so my workflow for mature conventional fields is different from the one I use in mature unconventional fields.

Can you recommend a few books or articles?

From a technical perspective, "Oil Field Production Geology" and the "Development Geology Reference Manual" are good books that will introduce the reader to technical concepts, while collaboration papers at AAPG or URTeC showcase real-world examples.

At its heart, geology is the science of finding anomalies, and recent strides in collecting and storing big data challenge us to do a better job of analyzing it so that we can accurately predict the location of more anomalies. I recommend Nate Silver's "The Signal and the Noise: Why So Many Predictions Fail but Some Don't".